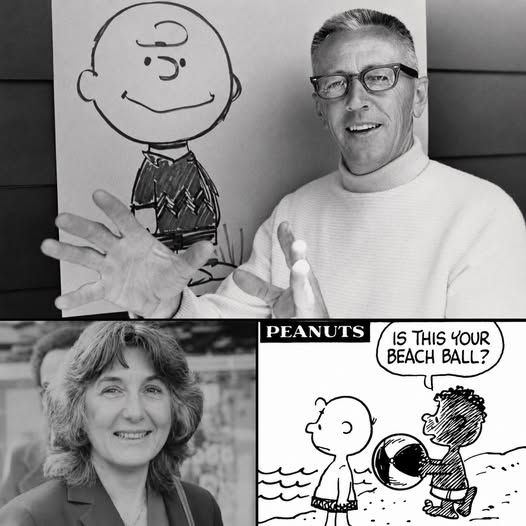

Eleven days after Dr. King was assassinated, a teacher sat down to try to get a Black child into America's most famous comic strip. In 1968, Harriet Glickman wrote to Charles Schulz and asked him to add a Black kid to Peanuts, where in eighteen years not one had ever appeared.

He almost said no, afraid that a white man drawing a Black child would look like pity. A hundred million readers, eighteen years, and the whole thing turned on one letter.

Eleven days after Dr. King was killed in Memphis, a schoolteacher in California sat down at her typewriter and wrote a letter to a cartoonist. She did not expect him to write back.

Her name was Harriet Glickman. She was forty-one, a mother of three living in the San Fernando Valley, and that spring she felt as powerless as everyone around her.

The country was coming apart. Cities were burning, the television was wall to wall with funerals, and a teacher in suburban Los Angeles kept asking herself what one ordinary person could possibly do.

She was not an activist.

She was a mother with a typewriter and a feeling she could not shake.

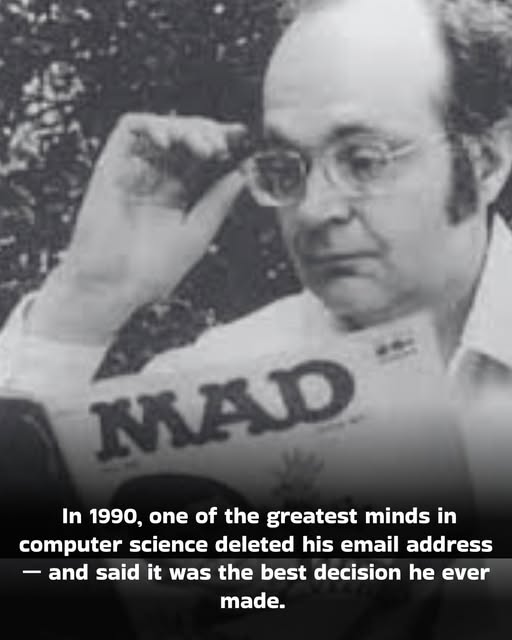

The man she wrote to was Charles Schulz. His comic strip, Peanuts, ran in around a thousand newspapers and reached close to a hundred million readers every week.

Charlie Brown, Lucy, Linus, Snoopy.

Eighteen years of that strip, going back to 1950, and not one of those children was Black.

Glickman had spent her life around children. As a teacher, she had watched something up close that stayed with her.

Black children and white children never saw themselves sitting side by side: not in school, not in the funny pages, not anywhere a child went looking for his own face.

So she said it plainly on the page. She wrote that since Dr. King’s death she had been asking what she could do about the “vast sea of misunderstanding, hate, fear and violence” that had swallowed the country.

She had actually sent the same idea to several cartoonists. Schulz was the one who wrote back.

That was the first surprise.

His reply was honest in a way that probably stung. He told her he had thought about putting a Black child in the strip, and that the idea frightened him.

Not because of his readers.

He was afraid of getting it wrong.

He worried it would come off like a white man patting Black families on the head, talking down to them. “I don’t know what the solution is,” he wrote, and left it right there.

A lot of people would have folded at that. A polite no from a famous man is an easy place to stop.

But Glickman wrote again, and Schulz answered again, and this time he sounded even more certain it was a mistake. He was sure that whatever he drew would come off as a white man being clumsy about something this raw.

Still she did not let it drop.

She wrote back and asked his permission to do one small thing.

She had no interest in speaking for Black people. So she asked if she could show his letter to some of her Black friends who were parents, and let them answer him in their own words.

Schulz said yes.

One of those friends was a man named Kenneth Kelly. He was a Black father of two young boys, and he was an engineer.

Not just any engineer.

Kelly worked on the Surveyor program, the unmanned American craft that had been setting down on the surface of the moon.

Sit with that picture for a second. A Black man helping land a spacecraft on the moon took the time to write a cartoonist about whether a Black child could sit in a comic strip.

Kelly was patient with him. He told Schulz that no Black parent he knew would call the gesture condescending, and that even if a few did, it would be “a small price to pay” for what it would give their children.

What it would give them was not complicated. It was the simple sight of themselves, somewhere inside the ordinary American picture they were shut out of every single day.

Kelly even told him how to do it. Do not make the boy a hero, he suggested, and do not turn him into a lesson.

Just a regular kid, one of the gang, nothing special, simply there.

Years later, Kelly would devote himself to fighting housing discrimination in his city. That year, he helped change a comic strip.

Another friend and parent, Monica Gunning, wrote to Schulz as well. The letters kept landing on his desk in Northern California, polite and unhurried and impossible to wave off.

All of this was happening while the year kept getting worse. In June, Robert Kennedy was killed in Los Angeles, Glickman’s own city, a few weeks after Kelly mailed his letter.

The country was taking blow after blow.

And in the middle of it, that quiet argument about a comic strip kept moving forward, one letter at a time.

Then, one day that summer, Schulz sent Glickman a short note. He told her to check her newspaper the week of July twenty-ninth, because he had drawn something he thought would please her.

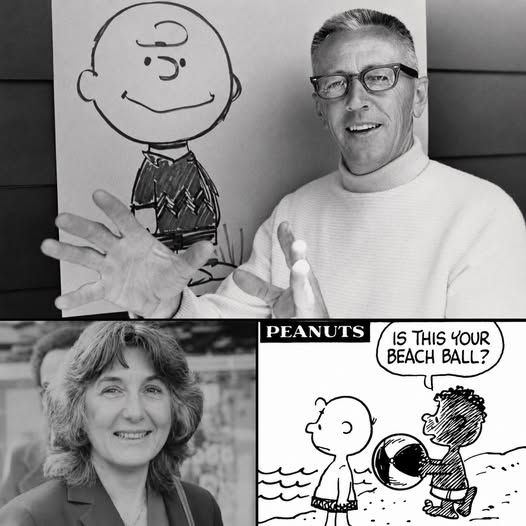

On July 31, 1968, Charlie Brown is standing on a beach, and he has lost his ball in the water. A boy he has never met before wades in and carries it back to him.

The boy’s name is Franklin. The two of them get to talking and build a sandcastle together, two children on a beach on a summer afternoon.

No speech. No halo.

No lecture about brotherhood, just a Black child being kind to Charlie Brown, printed in a thousand papers from coast to coast.

The strip would later show that Franklin’s father was a soldier serving in Vietnam. He was never written as a symbol.

He was somebody’s son.

When Franklin appeared, mail poured into Schulz’s office from all over the country. Most of it said the same simple thing, which was thank you.

It should have ended there, small and sweet. It did not.

When Schulz later drew Franklin in school, he sat him at a desk right in front of Peppermint Patty. A Black child and a white child, learning in the same room.

For one Southern newspaper editor, that was the line. He wrote to Schulz to say he did not mind a Black character, but please do not show the children in school together.

The man could accept Franklin existing in the strip.

He could not accept that child sitting in the same classroom as a white girl.

This was an era when Black children were walking into newly integrated schools behind federal marshals, and a grown man was objecting to a cartoon doing the very same thing.

Schulz had a decision to make, and he made it without any noise. Years later, asked what he had done about that complaint over the classroom, he gave a short answer.

It was five words. “I didn’t even answer him.”

He just kept drawing Franklin at his desk, right in front of Peppermint Patty.

Far off in Philadelphia, a six-year-old Black boy watched Franklin appear with no idea of the fight behind him. His name was Robb Armstrong.

That year had already taken something from him. His older brother had died thirty days before Franklin first turned up on that beach.

Thirty days.

A boy loses his brother, and a month later a new face shows up on the comics page he reads on the living room floor.

So here was a child who already knew the shape of a hole in a family. And then, right inside that grief, a Black kid walked into his favorite comic strip.

Robb looked at Franklin and thought one thing. “That’s like me.”

He had already told his mother, at three years old, that he was going to be a cartoonist.

Now he had proof there was room for him.

A Black boy could belong on the funny pages, because one already did.

That child grew up to become exactly what he had promised. Robb Armstrong created JumpStart, one of the most widely syndicated Black comic strips in the country.

And here is where the story closes a circle no one could have planned. Franklin, through all those decades, never had a last name.

In the 1990s, Charles Schulz picked up the phone and called Robb Armstrong. A special was in the works, every character needed a full name, and Schulz had just realized Franklin did not have one.

So he asked the grown man, the one who had once been that grieving six-year-old, whether he could borrow his name. Robb said yes right away.

That is why the first Black character in Peanuts is named Franklin Armstrong.

Armstrong called it the highest respect a person could be shown.

About the man who reached a lonely kid through a comic strip, he put it simply: "He inspired a kid."

Harriet Glickman lived to be ninety-three. She died in March of 2020, in the same Sherman Oaks house where she had typed that letter more than fifty years earlier.

The letter outlived her. It rests now in the Charles M. Schulz Museum, the real page, her real words, dated eleven days after Dr. King was killed.

You can stand in front of it today, behind glass, and read the date typed across the top. April 15, 1968, mailed by a woman who was certain no one was listening.